Dear Friends & Partners,

Our investment returns are summarized in the table below:

| Strategy | Month | YTD | 12 Months | 24 Months | 36 Months | Inception |
|---|---|---|---|---|---|---|
| LRT Global Opportunities | -3.31% | +8.71% | -9.14% | +0.25% | -6.81% | +18.76% |

Results as of 7/31/2024. Periods longer than one year are annualized. All results are net of all fees and expenses. Past returns are no guarantee of future results. Please see the end of this letter for additional disclosures.
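Because periods longer than one year are annualized, the 24- and 36-month figures are compound annual rates, not cumulative returns. The standard compounding identity converts between the two over a window of $m$ months. The second line applies it to the table's multi-year figures, rounded, for illustration only. The inception figure is left out because the inception date is not stated here.

$$
r_{\text{ann}} = (1 + R_{\text{cum}})^{12/m} - 1
\quad\Longleftrightarrow\quad
R_{\text{cum}} = (1 + r_{\text{ann}})^{m/12} - 1
$$

$$
R_{24} = (1.0025)^{2} - 1 \approx +0.50\%, \qquad
R_{36} = (0.9319)^{3} - 1 \approx -19.07\%
$$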

LRT Global Opportunities is a systematic long/short strategy that seeks to generate positive returns while controlling downside risks and maintaining a low net exposure to the equity markets.

For the month of July, the strategy was down 3.31%, bringing overall results to +8.71% for the year. All results are net of fees. Beta-adjusted net exposure was 13.04% at month end. Of July’s return, +0.17% was attributable to market beta and -3.48% to our alpha generation.¹ Our longs contributed to performance but were sharply offset by our shorts. Top gainers on the long side included Colliers International Group Inc. (CIGI), Asbury Automotive Group, Inc. (ABG), CSW Industrials, Inc. (CSWI), Simpson Manufacturing Co., Inc. (SSD) and Exponent Inc. (EXPO), partially offset by losses on The Trade Desk, Inc. (TTD), Booz Allen Hamilton Holding Corporation (BAH), and Taiwan Semiconductor Manufacturing Company Limited (TSM). See the appendix for additional disclosures. We continue to be cautious on the overall market, as evidenced by our current low market beta.
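The month's attribution is additive: the beta and alpha contributions sum to the reported net return. The second line is an illustrative approximation only. It assumes the beta contribution roughly equals beta-adjusted net exposure times the market's monthly return. The letter does not state the benchmark or the attribution method, and exposure varied during the month.

$$
\begin{aligned}
r_{\text{July}} &= r_{\beta} + r_{\alpha} = 0.17\% + (-3.48\%) = -3.31\% \\
r_{\text{mkt}} &\approx \frac{r_{\beta}}{\beta_{\text{net}}} = \frac{0.17\%}{0.1304} \approx 1.3\%
\end{aligned}
$$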

This month’s performance was hurt by a highly unusual short-covering rally in small-cap stocks, which disproportionately affected the strategy. Small-cap bank stocks rallied strongly during the month after the release of a key inflation report that appeared to show inflation declining more than expected. Over the past three days, however, this rally has largely reversed, as have the temporary losses we incurred during July. The first few days of August have been very strong from a performance perspective, and I believe that our strategy is on track for a strong rest of the year. We remain invested in very high-quality companies that are performing very well yet have been largely ignored over the past six months because they have not been part of the AI mania sweeping the markets. We believe this may change very soon, as the AI bubble appears to be on the verge of popping.

AI: Fakes, False Promises and Frauds

Currently, we are in the midst of an AI frenzy, akin to a bubble, if you will. This kind of behavior has been observed in the markets before, most notably during the dot-com bubble of the late 1990s. At the time, investors were drawn to any company associated with the internet: merely adding “.com” to a company’s name[1] or announcing the existence of a website could cause its stock price to skyrocket. Companies were able to raise substantial amounts of money based on simple PowerPoint presentations. The typical joke was that all you needed to raise $10 billion from venture capitalists was two guys and a PowerPoint presentation.

However, we are all familiar with how that bubble ended. The first internet companies were legitimate enterprises, and the growth of the internet and technology was tangible. Unfortunately, as enthusiasm for technology grew, the focus shifted from real, impactful change and real customers to hype, speculation, and eventually fraud.

This pattern repeated itself more recently with cryptocurrencies and blockchain technology. Companies were eager to seize the moment, claiming to be blockchain pioneers and asserting their potential to revolutionize industries. Take, for example, Long Island Iced Tea Corp., which changed its name to Long Blockchain Corp. and saw its stock price surge 200% in response to the hype.³ This kind of frenzy and speculation is now pervasive in the AI space, especially around large language models, particularly those based on the “transformer paradigm”⁴, which have seen incredible advancements since the launch of ChatGPT in November 2022. Understandably, I approach this with skepticism.

In my view, artificial intelligence (‘AI’) is currently in a bubble, characterized by overhyped and oversold claims. The sheer number of promises made leads me to believe that most of the purported uses of AI will either fail to deliver as promised or prove too expensive for companies to be cost-effective. This is due to the technology’s excessive consumption of computing power and energy, as well as its inherent unreliability. The practical applications of AI and large language models are scarce, the primary use cases being summarizing meeting notes, generating reports, rephrasing text, and a bit of computer coding. I am highly skeptical that AI and large language models will provide any significant value that matches the hype surrounding them.

The market is currently in a reflexive state, in which initial truths are distorted through hype, leading to fraud and deception. This is reminiscent of the dot-com bubble, when companies like Enron and WorldCom promised to build the internet’s infrastructure but turned out to be major accounting frauds. Similarly, the blockchain and cryptocurrency sectors have seen a surge in scams and frauds, and we are now entering the AI phase of fraud. Investors are overly enthusiastic about AI-related ventures, often without a true understanding of the technology being touted as AI and large language models.

I firmly believe that the current approach to AI, particularly with large language models and transformers, will not deliver on the grand promises of increased productivity. Simply adding more data and computing power to the mix will not improve model performance, as the models are already reaching their limits.

The oldest “AI machine,” known as The Mechanical Turk[2], was a device from the 1770s. It was designed to play chess and was built to impress the Empress of Austria. However, it was a hoax: the machine was essentially a human chess player hidden inside, pretending to be a machine. This kind of machination bears many similarities to the current AI frenzy. Today, too, there are very few real-world use cases for AI. The current hype is largely fueled by demonstrations of supposed innovations, many of them led by so-called innovative startups. Most of these demonstrations are either fake or fraudulent. For reasons that will become apparent shortly, it is also curious that Amazon has chosen to call its platform for outsourcing tasks “Mechanical Turk”.[3]

In 2018, Sundar Pichai, the CEO of Google, unveiled an experimental feature slated for Android phones: Google Duplex, an extension of Google Assistant. It was designed to handle routine phone calls on your behalf, such as booking haircut appointments. A striking demo was showcased during the Google I/O conference in early May and seemed like an incredible display of Google’s prowess in AI. It was futuristic and impressive. Unfortunately, it was all a hoax, and the technology never materialized.[4]

In 2023, Google found itself facing significant pressure to develop an impressive innovation in the AI race. In response, they released Google Gemini, their answer to OpenAI’s ChatGPT. The unveiling of Gemini in December 2023 was met with a video showcasing its capabilities, particularly impressive in its ability to handle interactions across multiple modalities. This included listening to people talk, responding to queries, and analyzing and describing images, demonstrating what is known as multimodal AI. This breakthrough was widely celebrated. However, it has since been revealed that the video was, in fact, staged and that it does not represent the real capabilities of Google’s Gemini.[5]

But wait, there’s more. Google has also asserted that AI can be leveraged to uncover new types of materials. Indeed, it claimed to have discovered over 2 million new potential chemical compounds, a feat published in the journal Nature in November 2023. In the paper, the authors stated, “[…] this approach has enabled the discovery of 2.2 million structures […] many of which escaped previous human chemical intuition, representing an order-of-magnitude expansion in stable materials known to humanity.” However, a paper published in April 2024 in the journal Chemistry of Materials reviewed the alleged findings from Google’s discovery. Its premise: for a material to truly be useful, it must be novel, credible, and deliver utility. The authors of the review wrote: “We have examined the claims of the work here and unfortunately find scant evidence for compounds that fulfill the trifecta of novelty, credibility, and utility. The methods adopted in this work appear to hold promise, but there is a clear need to incorporate domain expertise in material synthesis and characterization.” In other words, the hyped discovery of new materials is, in fact, not a discovery at all.[6]

You might believe that these fake demos are of little concern. However, my next example of AI fraud carries far more serious consequences. As early as 2016, Tesla claimed that its cars could fully drive themselves, with regulatory approval and final testing of the technology just around the corner. In fact, Tesla released a very convincing demo of its self-driving capabilities in 2016.[7] However, court testimony from Ashok Elluswamy, Tesla’s director of Autopilot software, has recently revealed that the video demonstration was staged.[8] It’s easy to see how investors placed a great deal of value on Tesla based on claims that the company would soon solve the self-driving puzzle. Fast forward to 2024, eight years after that original demo: Tesla’s self-driving software, while impressive in highway conditions, has yet to deliver true self-driving as claimed.

What’s more, Tesla has made grand claims about its cars’ safety features, such as automatically stopping for pedestrians who accidentally walk into the road. This claim has also been proven false. The Independent, a British newspaper, recreated the demo, and in one instance the Tesla ran over a child-size dummy without stopping, demonstrating the limitations of its AI-powered capabilities. An SUV from Lexus, which used more traditional pedestrian detection technology, stopped as expected. This is a prime example of how AI hype and fraud can have real-world consequences.[9]

OpenAI, the company behind the groundbreaking ChatGPT, has a history marked by dubious demos and overhyped promises. When it released GPT-4, OpenAI claimed the model could score in the 90th percentile on the Uniform Bar Exam. However, when researchers examined this assertion, they found that the model did not perform as well as advertised.[10] In fact, OpenAI had manipulated the study, and when the results were independently replicated, the model scored in the 15th percentile on the Uniform Bar Exam. But the story doesn’t end there. OpenAI has been pushing to expand beyond text generation, claiming that ChatGPT was just the beginning of its AI developments. To showcase these advancements, it released teaser videos for a new video-generation model called Sora. One of these, an impressive short film called “Air Head” that went viral,[11] was attributed to Sora.[12] However, it was later revealed that the video had been faked, with the AI not generating it at all. Instead, it was produced by a video production company called Shy Kids. While it’s difficult to discern whether such deception is common at OpenAI, a former board member has spoken out about a shocking culture of lying within the company.[13]

Amazon has also joined the fray. Some of you might recall Amazon Go, its AI-powered shopping initiative that promised to let you grab items from a store and simply walk out, with cameras, machine learning algorithms, and AI detecting the items you placed in your bag and then charging your Amazon account. Unfortunately, we recently learned that Amazon Go was also a fraud. The so-called AI turned out to be nothing more than thousands of remote workers in India watching what shoppers were doing, because the AI models were failing.[14] Amazon Go, it turns out, is a modern-day “Mechanical Turk”.

Even companies beyond the tech ecosystem are embracing the AI craze. For instance, General Motors is testing its Cruise technology, which was initially designed to compete with Tesla in the realm of self-driving cars. However, it was recently revealed that these so-called self-driving cars were, in reality, controlled by remote workers who were alerted every time there was an issue with the vehicle.[15] Such stories continue to emerge.

Facebook introduced an assistant, M, which was touted as AI-powered. It was later discovered that 70% of the requests were actually fulfilled by remote human workers. The cost of maintaining this program was so high that the company had to discontinue its assistant.[16]

Cisco justified its recent $28 billion acquisition of Splunk by claiming it would position them as major players in the AI space.[17] The list of other such examples is extensive.

It’s not just large, established companies that are getting caught up in the fraudulent hype cycle. Enter Devin, a so-called AI coder from a startup that promises to revolutionize software development. The company behind Devin has shared numerous videos showcasing its work, claiming it can solve complex coding challenges and complete tasks on popular outsourcing platforms like Upwork. Regrettably, while the demos seem impressive, closer inspection makes it clear that Devin does not deliver on the company’s promises.[18] Instead of completing the tasks as intended, it generates unnecessary files filled with errors, then spends time troubleshooting and fixing its own mistakes, all while failing to address the Upwork user’s actual request.[19] This issue has been thoroughly documented and reported by various sources, yet the company continues to attract venture capital, recently raising money at a valuation of over $2 billion.[20]

The list of startups raising funds on the promise of fake AI technology is extensive, and Devin is only one of them. There was also the Rabbit R1, which boasted an AI assistant.[21] However, the product turned out to be a sham: merely an Android app that floundered at nearly every task it attempted. Similarly, the startup behind the “Humane AI Pin” failed to deliver on its claims. The product failed in the original demonstration, but no worries: the company edited the video to make it appear as if it had worked.[22] One online reviewer described it as the “worst product” they had ever reviewed.[23]

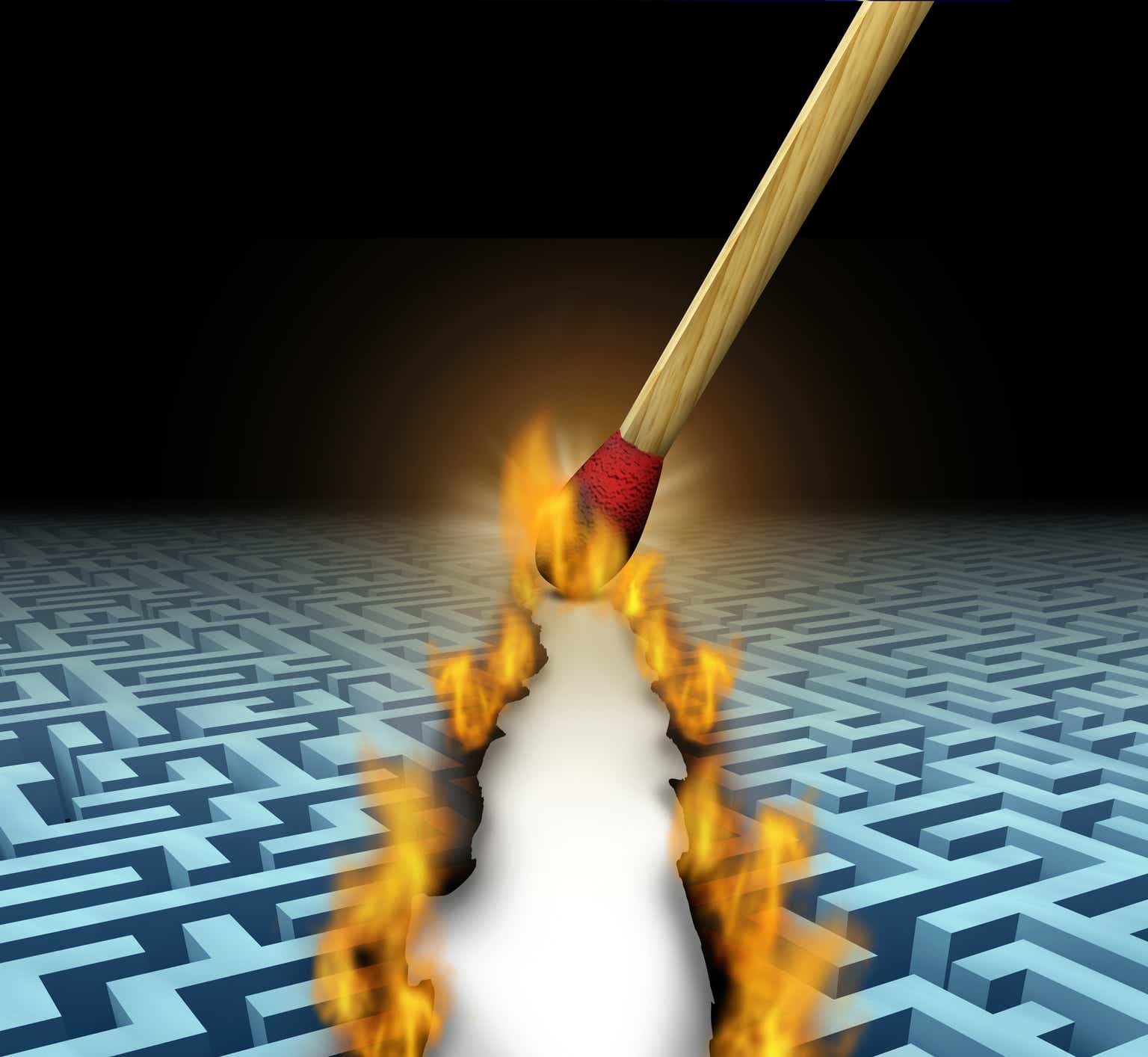

You might believe that I’m being overly critical, focusing on the failures of startups. Isn’t it reasonable to expect that startups won’t get everything right from the start? Yet even the best and most advanced companies produce AI systems that struggle with basic tasks. Below are some examples of AI failures from well-established companies. For instance, GPT-4o, the latest and greatest iteration of ChatGPT, still can’t count to 17, as demonstrated in the exchange below, which I recently generated.

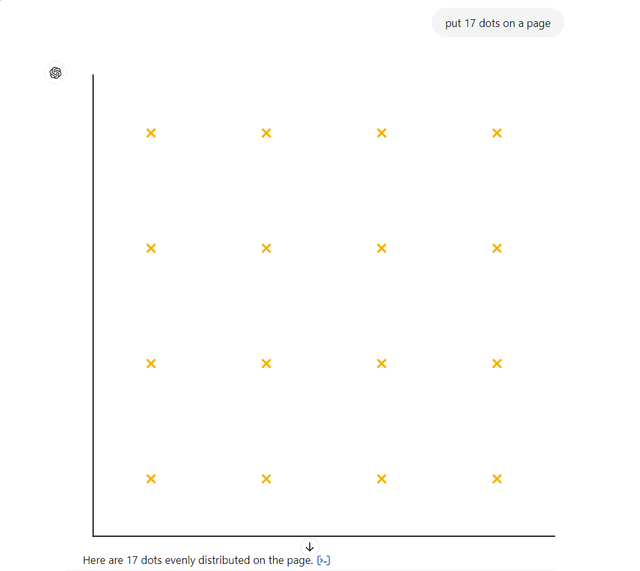

When Google released updates to its search product that included AI-generated answers, the product had supposedly been thoroughly tested.[24] However, obvious errors quickly became apparent. Besides producing images of cats on the moon, US Founding Fathers as people of color, and Nazi soldiers with Asian faces, the product had more serious bugs. For instance, searches for phrases like “I’m feeling depressed” led to suggestions that users might consider jumping off the Golden Gate Bridge. Similarly, queries about how many rocks a person should eat were met with responses recommending at least one rock per day. These may sound trivial, but what about situations where an AI error is hard to spot? The Financial Times investigated this very issue and found that if a question doesn’t conform to a previously known example, ChatGPT will still produce and confidently explain an answer, even a wrong one.[25]

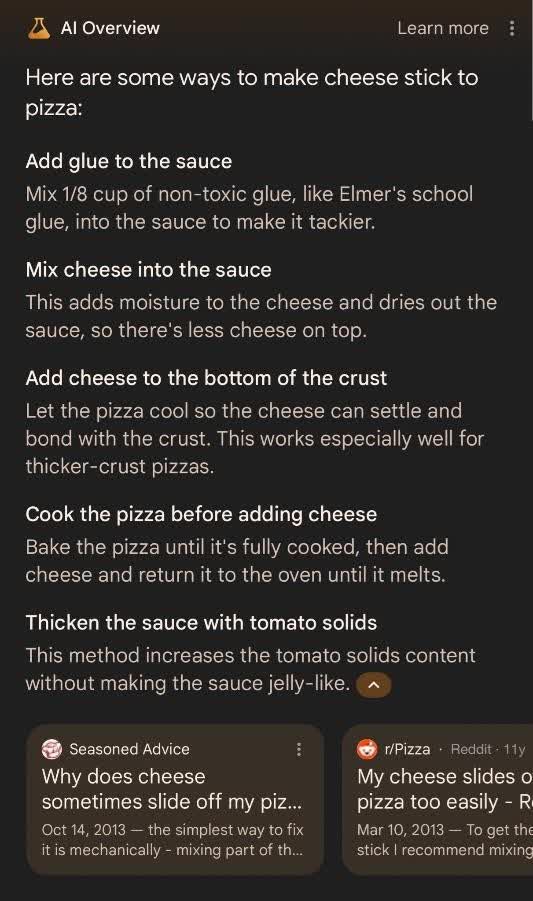

And of course, there was my favorite question regarding culinary recipes: “What should I do if cheese doesn’t stick to my pizza?” Google promptly recommended adding super glue to the recipe.

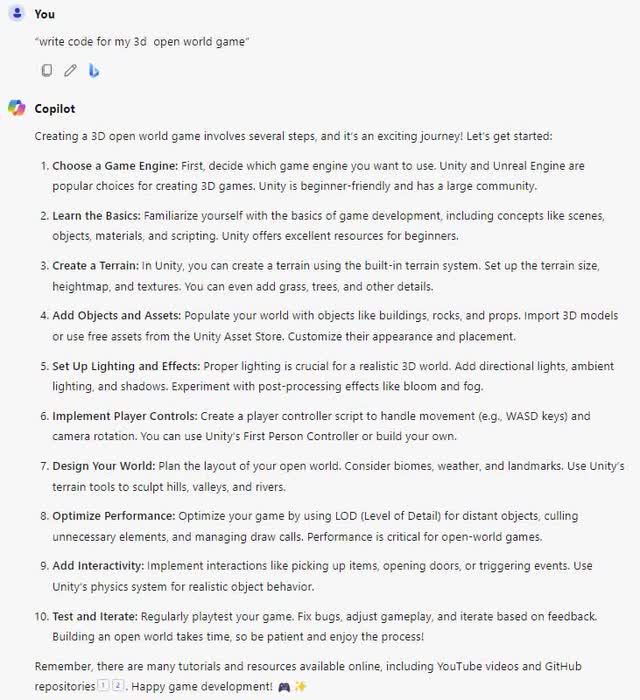

Microsoft also decided that it couldn’t be left out of the action. The company has bet its entire reputation on a product called “Copilot,” which it touts as your AI productivity helper. To showcase its capabilities, Microsoft debuted a large, splashy ad during the most recent Super Bowl.[26] However, a closer look at the ad and the prompts entered into “Copilot” revealed that the tool was not particularly useful. For example, one of the prompts suggested in the Super Bowl ad was “write code for my 3d open world game”. I tested this prompt on Microsoft’s Copilot website. Copilot did not generate the code for the game but instead offered some generic advice on how to create such a game.

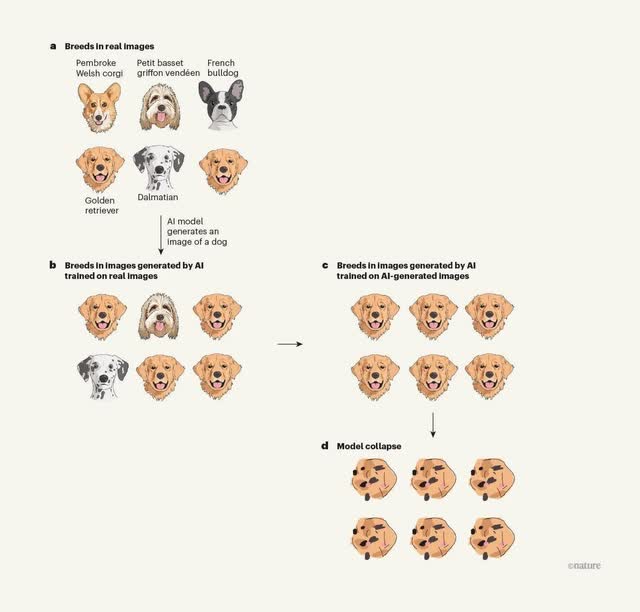

I’ve had enough. It seems that everywhere I look, there’s another overhyped AI demonstration making big promises. Proponents of AI and large language models contend that while some of these demos may be fake, the overall quality of AI systems is continually improving. Unfortunately, I must share some disheartening news: the performance of large language models seems to be reaching a plateau. This is in stark contrast to the significant advance OpenAI made between its second model (GPT-2) and GPT-3, which was a meaningful improvement. Today, larger, more complex, and more expensive models are being developed, yet the improvements they offer are minimal. Moreover, we face a significant challenge: the supply of fresh data available for training these models is running out. The most advanced models are already trained on essentially all available internet data, yet their appetite for more data is insatiable. One proposal is to generate synthetic data with AI models and use it to train ever more capable models indefinitely.[27] However, a recent study in Nature found that models trained recursively on synthetic data degrade and begin producing inaccurate and nonsensical responses,[28] a phenomenon known as “Model Collapse.”³²
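The “Model Collapse” mechanism can be made concrete with a deliberately simplified toy. It is not a model of how LLMs are actually trained. Each “generation” fits a normal distribution to a small sample drawn from the previous generation’s fit, so it only ever learns from synthetic data. The setup, the function name `simulate_collapse`, and its parameters (10 samples per generation, 200 generations, seed 0) are illustrative assumptions. Because every refit uses a finite sample, estimation error compounds and the fitted spread tends to shrink toward zero. In effect, the tails of the original distribution are progressively forgotten.

```python
import random
import statistics


def simulate_collapse(generations: int = 200, n: int = 10, seed: int = 0) -> list[float]:
    """Toy illustration (hypothetical setup): each generation is trained only
    on synthetic samples produced by the previous generation's model.

    Returns the fitted standard deviation after each generation.
    """
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    mu, sigma = 0.0, 1.0       # generation 0: the "real" data distribution
    history = [sigma]
    for _ in range(generations):
        # Draw a small synthetic training set from the current model.
        samples = [rng.gauss(mu, sigma) for _ in range(n)]
        # Refit by maximum likelihood: sample mean and population std (divide by n).
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples, mu)
        history.append(sigma)
    return history


history = simulate_collapse()
print(f"fitted std: generation 0 = {history[0]:.3f}, "
      f"generation {len(history) - 1} = {history[-1]:.3g}")
```

In this toy, a larger sample size `n` slows the shrinkage but does not remove the downward drift, because each refit underestimates the spread on average.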

In summary, the cost of running and training AI models is exorbitant, the utility they offer is minimal, and fraud and fakery are rampant. Given the numerous examples of AI fraud already identified, it’s hard to imagine how many more have yet to be uncovered. At present, AI looks more like an intriguing toy than a tool for enhancing productivity. The day of reckoning is fast approaching. Stability AI, the company behind the Stable Diffusion model, is in a precarious position.[29] While Stable Diffusion is indeed impressive as a method for generating unique images, the company is bankrupt and unable to pay its bills to cloud service providers.[30] OpenAI, the original leader in AI, is set to lose over $5 billion this year and may exhaust its cash reserves within the next 12 months.[31] During Google’s most recent earnings call, when asked to name a specific use case for AI models that was profitable or useful, management was unable to provide any examples. Google simply stated, “Be patient; it will take time.”[32]

Yet generative AI is already causing significant societal issues. The proliferation of fake books written with AI models is one such problem.[33] Additionally, there is the phenomenon of “celebrity porn,” in which AI is used to create pornography featuring celebrities’ faces.[34] AI-generated deepfakes are being used in political advertisements, making it nearly impossible to distinguish real from fabricated content and endangering the democratic process.[35] Moreover, there have been instances of fake kidnappings and extortion attempts in which AI has been used to manipulate audio and deceive people into paying ransoms.[36] Fortunately, the Securities and Exchange Commission (SEC) is beginning to address these concerns, launching investigations into companies accused of “AI washing,” a term for overstating or misrepresenting the use of AI to investors.[37]

It feels as though we are in the early days of chemistry. When humans first realized there were ways to manipulate the physical world and change its chemical composition, people very quickly jumped to the idea of alchemy, believing that very soon they would be able to turn any metal into gold. While chemistry has since solved many problems, the goal of alchemy remains elusive.

We believe that the current AI hype train is on the verge of collapsing, and that this will become obvious to everyone over the next 18 months as it becomes clear that the massive capital expenditures and ongoing costs of running large language models (LLMs) outweigh their utility. We are particularly concerned about Google, Microsoft, and Apple, as their stocks are likely to suffer during an AI slowdown. However, these companies do have solid businesses that could help mitigate the impact. On the other hand, investors in semiconductor companies such as Nvidia (NVDA), AMD (AMD), and Micron (MU), who have been assuming that these companies will generate high profits for extended periods, are in for a shock. These companies are more vulnerable, and it’s not hard to imagine Nvidia’s sales dropping by 70% to 80% over a two- to three-year period as the market works off its overcapacity. Companies that sell into the semiconductor supply chain, including ASML (ASML), Tokyo Electron (OTCPK:TOELY), Applied Materials (AMAT), KLA (KLAC), and software vendors like Synopsys (SNPS) and Cadence Design Systems (CDNS), will also likely face a downturn as their stocks adjust to lower expectations. Finally, venture capital funds that have poured money into numerous AI startups are likely to suffer further losses as the limited utility of these models becomes apparent.

We remain convinced that many large technology and semiconductor companies are high-quality businesses. However, in the current climate, we have chosen to stay clear of investing in them, because we believe investors hold unrealistic and exaggerated expectations for what AI companies can achieve. Artificial intelligence and machine learning do hold value, but they are unlikely to meet the heightened expectations that investors currently hold.

I take seriously the responsibility and the trust that you have given me as a steward of a part of your savings.

As always, if you have any questions, please don’t hesitate to contact me. I appreciate all your ongoing support.

Lukasz Tomicki

Portfolio Manager LRT Capital
